Crowdsourced seismic sensors might save your life someday


Crowdsourced Seismic Sensors?

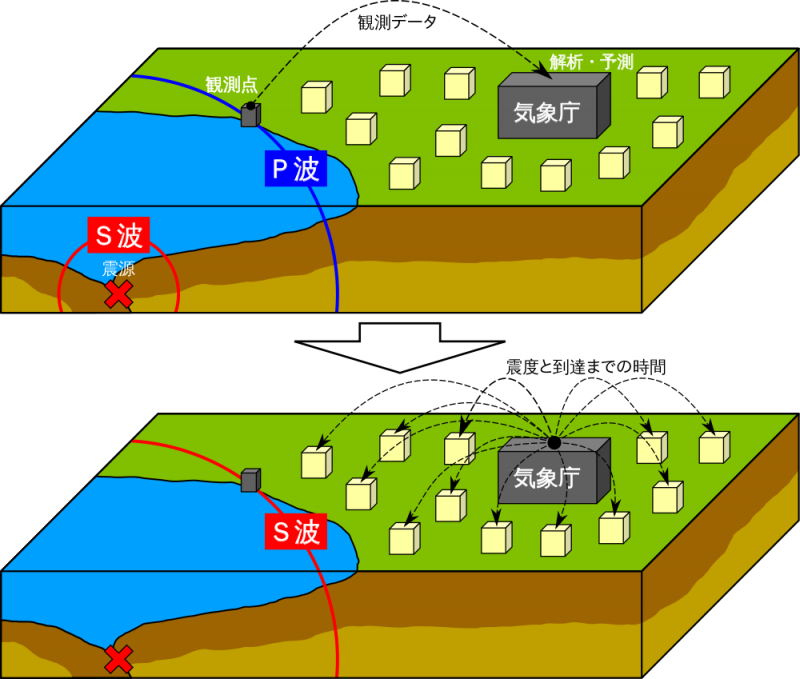

A frequent topic on this blog is the use of Arduino and crowdsourced technologies to address air quality issues. Can similar technologies, adapted from air quality monitoring, be used to improve seismic predictions? It turns out the answer is yes.

Unless you’ve been living under a large rock these last few days, you’ve probably heard that Los Angeles was struck in the last two weeks by what the USGS describes as a “moderate” 5.1 earthquake, with “light” foreshocks and aftershocks of around 4.5. (The Saint Patrick’s Day foreshock temblor prompted our earlier article on robot-written newspaper articles, music, and movies.)

During that same period, there were quakes of roughly similar magnitude in Chile, Alaska, Greece, and Japan. And let’s not forget the 5.7 quake that struck DC back in 2011, to much mirth on Facebook. There were significant differences between these quakes and the ones in Los Angeles: (1) they didn’t occur underneath a megapolis of some 13+ million people, (2) they didn’t occur under one of the world’s major media capitals, where celebrities and publicists are conditioned, like Pavlov’s dog, to associate earthquakes with the salivating opportunity to tweet against a trending hashtag, emergency smartphone power at the ready, and (3) they didn’t have 100 aftershocks within a 24-hour period.

Using Analytics to Figure Out if It’s Time for Vegas, Baby

[Tweet: “LA quakes: Time to head for Vegas, Baby?”] Some of the tweets suggested Los Angelinos should beat a hasty retreat out of town. (Easier said than done for an area with 13 million citizens and serious traffic problems. Fortunately, most people aren’t following that advice, and our freeways remain open.) But this is a blog we’re we’ve talked about data analysis. In fact, one of the slogans for our blog should be:So are we in danger? Is it time, as some tweets suggested, to visit Vegas again, so soon after just blogging about CES?

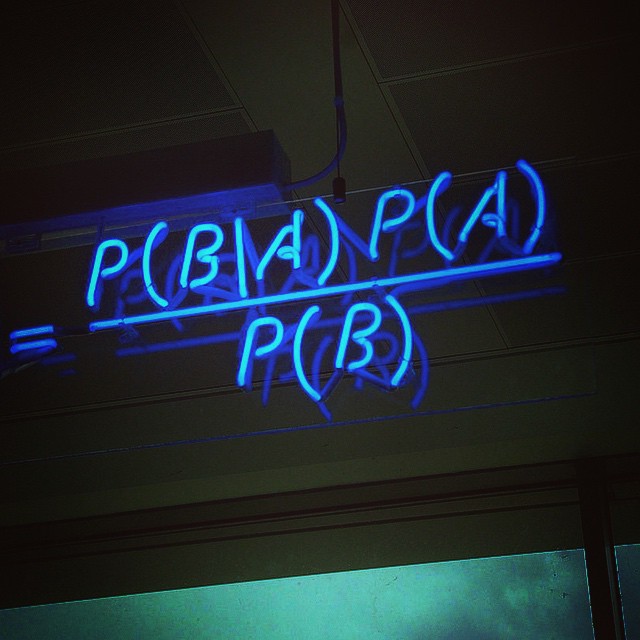

We’re going to do another blog article on why formal decision making using data is useful in overcoming known biases in human decision making. (Scary, media-exaggerated, life-and-death situations like these are rife with demonstrable decision-making bias.) Nate Silver, in his best-selling book on big data analysis, spends an entire chapter on data overfitting and the hazards of earthquake prediction.

Earthquake prediction from data is not easy. There is a ton of data from seismographs, but as with certain problems in economics or business, the underlying processes are very poorly understood. It’s easy to overfit the data and come up with a model that has “99% R^2” or some similar supposed statistical accolade, only to have in reality a very poor model that fits mainly noise and is not very predictive. Silver writes that many seismologists have fallen into this trap with bogus models that are able to “predict” the past but not the future. He explains this very clearly using simple analogies, and we’ll refer those readers who are interested to his book.
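To make the overfitting trap concrete, here is a minimal sketch in Python. It is not taken from Silver’s book or from any seismology model. The data are made up (a flat signal plus random noise), and `memorizer` is a hypothetical stand-in for an overfit model that simply replays the closest point it has already seen.

```python
import random

def r_squared(actual, predicted):
    """Coefficient of determination: 1 - (residual sum of squares / total sum of squares)."""
    mean = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot

def make_data(rng, n):
    # Hypothetical data: the true signal is flat, so every wiggle in y is pure noise.
    xs = [rng.uniform(0.0, 100.0) for _ in range(n)]
    ys = [5.0 + rng.gauss(0.0, 1.0) for _ in range(n)]
    return xs, ys

def memorizer(train_x, train_y):
    """An overfit 'model': predict the y value of the nearest training x."""
    pairs = list(zip(train_x, train_y))

    def predict(x):
        return min(pairs, key=lambda p: abs(p[0] - x))[1]

    return predict

rng = random.Random(0)  # fixed seed so the run is repeatable
train_x, train_y = make_data(rng, 200)
test_x, test_y = make_data(rng, 200)

model = memorizer(train_x, train_y)
r2_train = r_squared(train_y, [model(x) for x in train_x])
r2_test = r_squared(test_y, [model(x) for x in test_x])

print(f"R^2 on the data the model was fit to: {r2_train:.2f}")
print(f"R^2 on fresh data it has never seen:  {r2_test:.2f}")
```

The model scores a perfect R^2 on the past because it memorized the noise, and it does worse than simply guessing the average on new data. That is the “predicts the past but not the future” pattern in miniature.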

So what does work? Simple models making long-term “forecasts” (not “predictions”; the USGS insists the two are different) are quite predictive. For Los Angeles, looking at past quake history, the USGS estimates a “major” quake (defined as magnitude 6.5 or larger) as occurring once every 40 years (not as bad as a number of other heavily populated regions). It does not mean that quakes happen exactly every 40 years, or that if one hasn’t happened in 40 years one is overdue; this is simply the longer-term average. (Which the press has again gotten wrong in this most recent reporting. Just today a major newspaper reported that a certain LA fault produces a major quake every 2500 years, but no one knew when the last such quake was, so they weren’t sure if we were “overdue”. This is a statistical frequency; when the last quake was tells you nothing about tomorrow.)
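The “overdue” point can be checked with a few lines of Python. This sketch assumes quakes arrive as a constant-rate (Poisson) process, which is the simplest model consistent with treating the 40-year figure as a statistical frequency. It is our assumption for illustration, not necessarily how the USGS builds its forecasts, and `prob_quake_within` is a hypothetical helper name.

```python
import math

def prob_quake_within(years_ahead, mean_interval_years, years_since_last=0.0):
    """P(quake in the next `years_ahead` years, given none for `years_since_last` years).

    Assumes exponentially distributed waiting times (a constant-rate Poisson process).
    """
    rate = 1.0 / mean_interval_years

    def survival(t):
        # Probability that no quake occurs during t years.
        return math.exp(-rate * t)

    quiet_so_far = survival(years_since_last)
    quiet_through_window = survival(years_since_last + years_ahead)
    # Conditional probability: quake falls inside the window, given quiet so far.
    return (quiet_so_far - quiet_through_window) / quiet_so_far

for waited in (0, 40, 80):
    p = prob_quake_within(1, 40, years_since_last=waited)
    print(f"{waited:>2} years since the last major quake: {p:.4f} chance in the next year")
```

Under this assumption the chance of a major quake in the coming year is about 2.5% whether the last one was yesterday, 40 years ago, or 80 years ago. The waiting time already served does not change the answer.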

OK, a major quake once every 40 years. That means on a given day Los Angeles has roughly a 1 in 14,600 chance (40 years × 365 days) of a 6.5 quake or greater. (Of course, most of the city will survive that quake, thanks to modern earthquake construction codes and perhaps even a future earthquake early warning system, which we’ll get to in a bit. The USGS estimates the lifetime odds of a US citizen dying in an earthquake are 1-in-131,890. Obviously, those odds are higher for residents of California, but not much higher since 10% of the country lives here. About 1 in 100 US citizens will die in a motor vehicle accident, and at 1 in 100,000, a US resident is more likely to die from a snake bite or bee sting than from an earthquake, according to the CDC.) So, even with roughly 1 in 14,600 odds of a 6.5 quake striking on any given day, we’ll take Los Angeles over, say, cities in rattlesnake country any time.
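The daily-odds arithmetic is easy to reproduce. The sketch below assumes a constant quake rate over time and a 365.25-day year (both our assumptions), and `daily_probability` is a hypothetical helper name. Under those assumptions a once-in-40-years event works out to roughly 1 in 14,600 per day.

```python
import math

def daily_probability(mean_years_between_events, days_per_year=365.25):
    """Chance of at least one event on a given day, assuming a constant-rate (Poisson) process."""
    rate_per_day = 1.0 / (mean_years_between_events * days_per_year)
    return 1.0 - math.exp(-rate_per_day)

p_day = daily_probability(40)   # USGS long-term average quoted above: one 6.5+ quake per 40 years
one_in = round(1 / p_day)       # express the probability as "1 in N"

print(f"Daily chance of a 6.5+ quake: about 1 in {one_in:,}")
```

Swapping in a different average interval (say, the 2500-year fault mentioned earlier) shows how quickly the daily odds shrink for rarer events.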

What about the aftershocks? Do they increase the odds? The big problem is that the underlying processes behind earthquakes are extremely poorly understood. Some models have described the earth as similar to a complex tangle of rubber bands. Pressures build up over geologic time due to plate tectonics. The rubber bands get stretched very slowly over many years, until, suddenly, a bunch break all at once, and you have an earthquake. This may destabilize some of the remaining rubber bands, which may then break shortly thereafter, and you end up with foreshocks and aftershocks.
