Thus, a computer system like Watson that analyzes medical articles need not limit itself strictly to the human-readable text. It could parse the semi-structured citation data for each paragraph and sentence, and jump from that to the human-annotated, machine-readable MeSH terms for each cited article. From these MeSH terms, it could gain further insight into the meaning of the paragraph or sentence that references the cited article. (Medical articles typically have a great many citations.) In addition to MeSH terms, there is the machine-readable Science Citation Index (and its competitors), which seeks to quantify the quality, importance, or influence of scientific articles by counting the number of times each has been cited in other scientific articles.
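To make the idea concrete, here is a minimal sketch of jumping from a sentence's citations to the MeSH terms of the cited articles. Everything in it is hypothetical: the article IDs, the sentence IDs, the term assignments, and the function names are made up for illustration, and this is not a description of how Watson actually works.

```python
from collections import Counter

# Hypothetical data: article IDs and MeSH term assignments are illustrative only.
MESH_TERMS = {
    "art-A": ["Lung Neoplasms", "Smoking"],
    "art-B": ["Lung Neoplasms", "Tomography, X-Ray Computed"],
    "art-C": ["Hypertension"],
}

# Hypothetical citations attached to each sentence of the article being analyzed.
SENTENCE_CITATIONS = {
    "s1": ["art-A", "art-B"],
    "s2": ["art-C"],
    "s3": [],
}


def mesh_profile(sentence_id):
    """Count the MeSH terms of every article cited by one sentence."""
    counts = Counter()
    for cited in SENTENCE_CITATIONS.get(sentence_id, []):
        counts.update(MESH_TERMS.get(cited, []))
    return counts


def citation_counts():
    """Count how often each article is cited (a crude influence score)."""
    counts = Counter()
    for cited_list in SENTENCE_CITATIONS.values():
        counts.update(cited_list)
    return counts


if __name__ == "__main__":
    print(mesh_profile("s1").most_common())
    print(citation_counts().most_common())
```

A term shared by several of a sentence's cited articles is a reasonable hint about what that sentence is discussing, even before any natural language processing is applied to the sentence itself.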

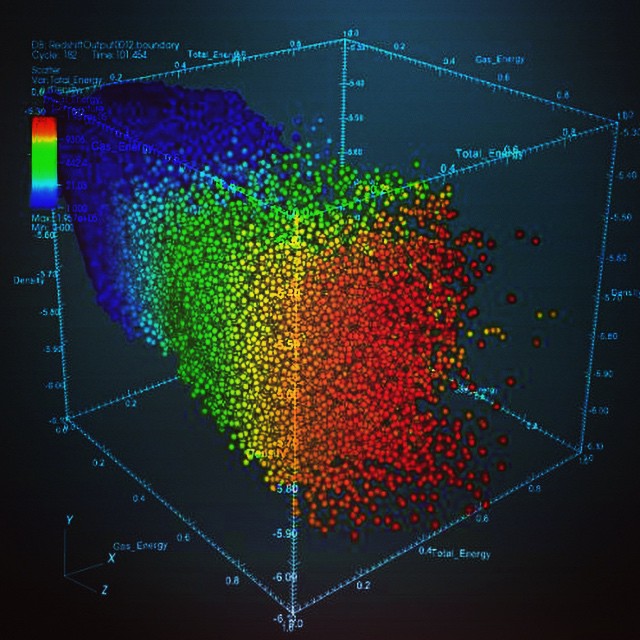

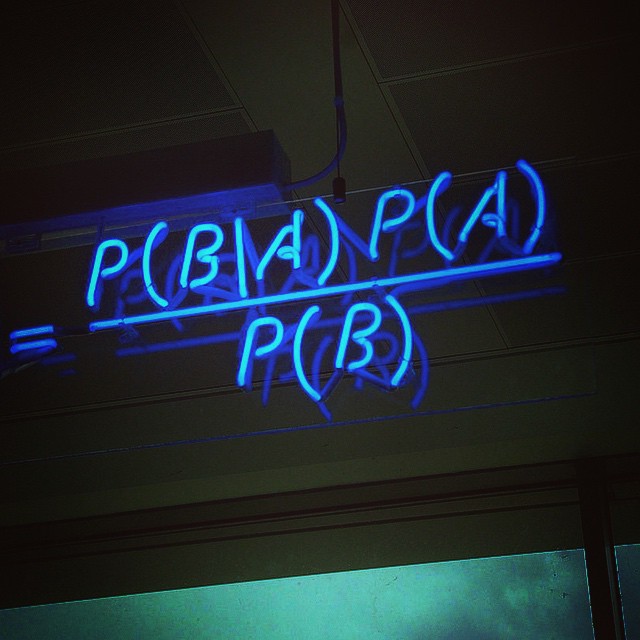

However outstanding Watson’s ability to process human-readable text is, it would be foolish for Watson not to use this already machine-readable data to gain additional insights. Many other fields of scientific endeavor don’t yet have extensive machine-readable subject annotations such as the MeSH terms that the NLM has painstakingly assigned to each article. Other areas of scientific endeavor may rely far more on non-text content to convey meaning, such as mathematical equations, theorems, computer code, tables, or graphs. Many fields also use far fewer citations, owing to a much smaller body of relevant literature. Although Watson’s Jeopardy! championship gives a clear hint, we won’t know how significant these differences are until there’s a Watson for pure mathematics or geophysical chemistry.

Of course, these other fields lack the business model of physicians with the financial resources and a real need for a computer assistant. This makes medical articles low-hanging fruit for both business and technical reasons.

We briefly suggested earlier that this perhaps wasn’t all that new a technology. Recall we mentioned that one of the machine-readable data points associated with a scientific article is a count of how often it has been cited in other articles. This count has been used for many decades to attempt to quantify (in a crude way) the quality, popularity, or importance of scientific articles. It was sort of the PageRank of its day. (PageRank, named after Google co-founder Larry Page, was the original Google search engine algorithm.)

Origins of Google

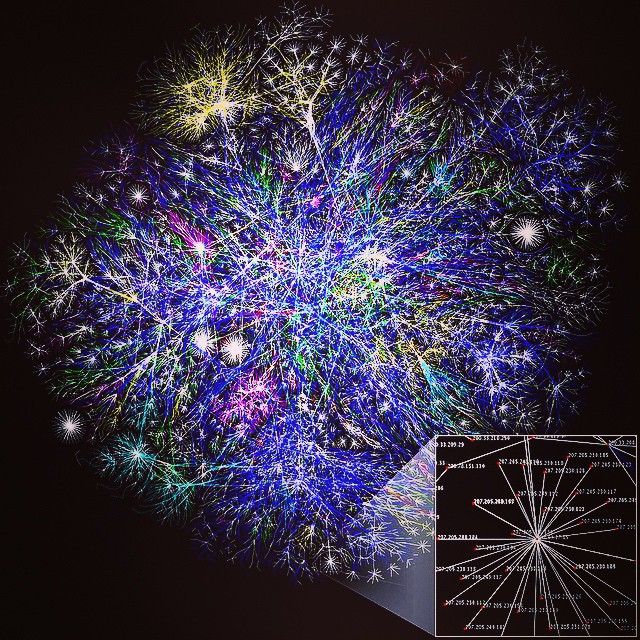

In fact, this is where Google got its start. Recall that Google was originally a PhD project at Stanford to help libraries keep track of scientific articles. Google’s founders, then Stanford PhD students, realized that the number of times a web page was linked to could serve much the same purpose as the Science Citation Index. Thus, counting links provided a way to numerically score articles. (Prior to that, search engines mainly just looked at the keywords in each article.)
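As a rough illustration of link-based scoring, the sketch below first counts inbound links (the direct analogue of a citation count) and then runs the commonly published simplified PageRank iteration, in which a link from a highly ranked page counts for more. The page names, the link graph, the damping factor of 0.85, and the iteration count are all assumptions chosen for the example, and every link target is assumed to appear as a key in the graph. This is a textbook-style sketch, not Google's production algorithm.

```python
def inbound_counts(links):
    """Count inbound links per page, like a raw citation count."""
    counts = {page: 0 for page in links}
    for targets in links.values():
        for target in targets:
            counts[target] = counts.get(target, 0) + 1
    return counts


def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by repeated redistribution of rank along links."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with the small "random jump" share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                # A page splits its damped rank evenly among its outbound links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its rank over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical four-page web: three pages link to "c", and "c" links to "a".
LINKS = {"a": ["c"], "b": ["c"], "c": ["a"], "d": ["c"]}

if __name__ == "__main__":
    print(inbound_counts(LINKS))
    print(pagerank(LINKS))
```

In this toy graph, page "a" has only one inbound link, yet it ends up ranked well above "b" and "d" because that single link comes from the heavily linked page "c". That weighting is the refinement over a plain count.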

From the beginning, every Google search has implicitly been a question: a request for the most relevant information on a specific topic. You can even ask Google Jeopardy-like questions. (Well, you know we mean Jeopardy! answers, since questions are answers on Jeopardy!) Originally, the topics of those questions were intended to be scientific, and the pages returned were links to scientific articles.

Of course, Watson is obviously much better at solving Jeopardy! trivia than Google or Google Now. Watson wasn’t connected to the Internet during the Jeopardy! challenge. (It had to rely on information it had already downloaded.) It didn’t return a web page, but rather the best sentence giving a concise, human-like solution to the trivia problem. It formulated these sentences on its own, by parsing the information in its databanks. (The game requires each solution to be in the grammatical form of a question. This additional twist shows Watson was generating its own grammatically correct sentences, rather than merely mining existing sentences on web pages.)

Google can do something similar for some simple, common questions that appear to be preprogrammed (“What is the current time in Madrid?”). The search engine Wolfram Alpha that Apple’s Siri sometimes uses can answer an additional universe of questions that appear to be preprogrammed, together with solving some math problems by harnessing a Wolfram-Mathematica engine.

So Watson-like technology is already in products like Siri and Google Now. Of course, these didn’t exist (in their present sophistication) at the time IBM did its Jeopardy! Challenge. Both require access to the Internet to answer questions, and often take a much longer time to respond than Watson was permitted in competition. Often the responses are still lengthy web pages rather than the concise, accurate answers Watson was capable of generating.

So what is Watson, exactly?

Which brings us back to our original problem. What exactly is Watson?

If you visit the Watson developer ecosystem website, the general public only has access to some glossy marketing brochures and videos. These are high-concept and mostly say little about the underlying technology.

(The Wikipedia article on Watson isn’t much more helpful. It is lengthy but mostly discusses the Jeopardy! stunt. We assume this is because so little else is known about the system. It does note a medical application in field trials for lung cancer diagnosis. According to the article, 90% of the nurses in that field trial now rely on Watson’s judgement. The article cites Wolfram Alpha, which we mentioned above in connection with Apple’s Siri, as the main competitor.)

In recent months, IBM has started to explain a bit more, sort of. They’ve announced a Watson app cloud. They’re still only letting in a small number of companies at the moment. (Apparently Elance is one of them.) Everyone else gets glossy marketing brochures and videos.

Eventually, it seems IBM will publish a public API to Watson, as well as provide a hosted cloud service for Watson-enabled apps, a la Google App Engine. (They’re taking sign-ups from people interested in the public announcement.)

One of the initial problems with Watson is that it apparently required a substantial up-front investment. This was in the form of a state-of-the-art data warehouse and the staff to run it.

(Our initial suspicions that Watson was built on top of IBM DB/2 were quickly confirmed. In addition to significant software licensing costs, installing and maintaining a DB/2 installation was non-trivial the last time we checked. Developers used to free systems like MySQL may not realize it, but multi-million dollar R&D investments in proprietary fast join optimization and parallelization technologies go into systems like Oracle, SQL Server, or DB/2. This, and the existence of legacy software, is why people put up with the maintenance expense of these systems.)

The Watson cloud

So setting up a cloud ecosystem makes perfect sense. IBM will maintain the technology’s complex software and hardware stack. Developers can rent Watson instances a la the Amazon EC2 model. Companies can focus on writing innovative apps, not on maintaining complex data warehouse hardware, software, and support staff. With the cloud-based model, the barrier to entry for innovation drops from very substantial to near zero.

Elance is mentioned in IBM’s glossy video. We’ve already discussed why searching job descriptions and resumes in a “natural” way could be huge.

The missing technology for analytics and Internet of Things

Semantic text searching and improved natural language and unstructured data processing are the key missing ingredients for analytics and the Internet of Things. We’re sure to have more to say on this key emerging technology in the future.



We were curious to know what the folks at IBM thought about some of our proposed uses for Watson, so we posted to the IBM developer forum. Will Sennett of IBM was kind enough to write a detailed response on the IBM site.

Here’s an excerpt: “I’d have to dig a bit more at the FBI assistant example … certainly solutions in the big data and analytics realm that are a great fit for government…. On the HR side, I think you’re spot on. In fact, one of our Watson Mobile Developer

[Watson] application difficulty and complexity is probably dependent on the data ….”

Read his full response on the IBM forum.
