

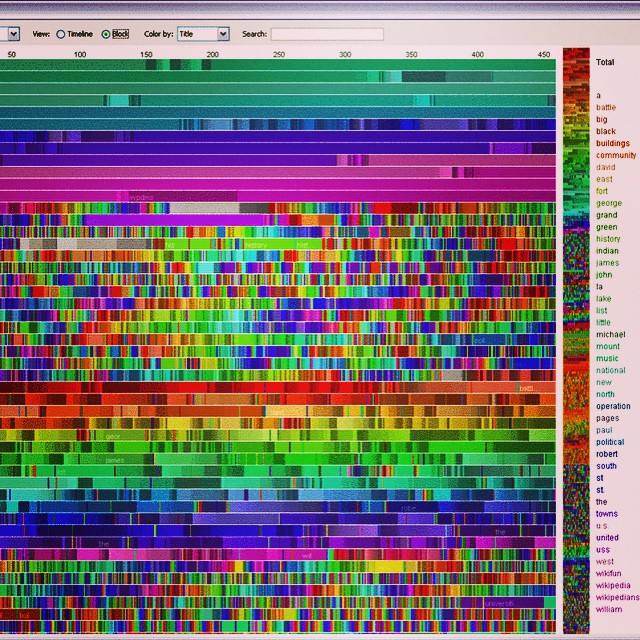

However, IBM Watson must do a great deal more to win Jeopardy! Finding a close match between the text of a question and a passage in Wikipedia is, in many cases, far from finding the correct answer. IBM Watson looks at many other factors, such as the implied historical period of the question (modern medicine in Wikipedia would give the wrong answer to a question about medieval medicine). It has distance metrics for the popularity and authenticity of the data source. (Popular data sources aren’t always right. One example given is the lengths of the borders of South American countries, where a common figure frequently quoted by newspapers is, in fact, wrong.) When these different distance metrics are in conflict, it then applies machine learning to learn which metrics should dominate in any given answer.

The corollary, of course, is that much of the development time for a novel Watson solution will go into writing code to compute distance metrics. For example, in our hypothetical FBI database Watson implementation, suppose a frequent use case was matching partial license plates using natural language. Let’s say witnesses frequently said things like “license plate started with NQZ, the suspect had blue eyes, and last name sounded like Mike.” You could pay an in-demand SQL programmer to write complex SOUNDEX and regex queries against some database, and maybe come back with an answer several hours and several hundred dollars later. Or you can have IBM Watson or another natural language processing system (hopefully) figure out how to retrieve this information from your natural language query, using much more computing power but presumably working much faster and at lower total cost than the dedicated SQL programmer. In order to do that, however, Watson would probably need new distance metrics written for things the FBI (or witnesses) would commonly search for, such as (in our example) license plates or similar-sounding names. Basically, new distance metrics probably have to be written for anything that doesn’t frequently come up in Jeopardy!
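To make the idea concrete, here is a minimal sketch of what two such custom distance metrics might look like. It is only an illustration under our own assumptions, not how Watson actually does it: the function names `plate_prefix_distance` and `name_sound_distance` are hypothetical, the plate metric assumes the witness remembers the start of the plate, and the name metric uses the classic American Soundex code as a stand-in for "sounds like".

```python
# Letter-to-digit table for classic American Soundex.
_SOUNDEX_CODES = {
    **dict.fromkeys("BFPV", "1"),
    **dict.fromkeys("CGJKQSXZ", "2"),
    **dict.fromkeys("DT", "3"),
    "L": "4",
    **dict.fromkeys("MN", "5"),
    "R": "6",
}


def plate_prefix_distance(partial: str, full: str) -> float:
    """Distance in [0, 1] between a remembered plate prefix and a full plate.

    0.0 means every remembered character matches the start of the plate,
    1.0 means none do. Hypothetical metric, for illustration only.
    """
    partial = partial.upper().replace(" ", "")
    full = full.upper().replace(" ", "")
    if not partial:
        return 1.0  # nothing remembered, so no evidence of a match
    mismatches = sum(
        1 for i, ch in enumerate(partial) if i >= len(full) or full[i] != ch
    )
    return mismatches / len(partial)


def soundex(name: str) -> str:
    """Return the four-character Soundex code of a name ("" if no letters)."""
    letters = [c for c in name.upper() if c.isalpha()]
    if not letters:
        return ""
    code = letters[0]
    prev = _SOUNDEX_CODES.get(letters[0], "")
    for ch in letters[1:]:
        digit = _SOUNDEX_CODES.get(ch, "")
        if digit and digit != prev:
            code += digit
        if ch not in "HW":  # H and W do not separate letters with equal codes
            prev = digit
    return (code + "000")[:4]


def name_sound_distance(a: str, b: str) -> float:
    """Fraction of Soundex positions that differ (0.0 = same code)."""
    code_a, code_b = soundex(a), soundex(b)
    if not code_a or not code_b:
        return 1.0
    return sum(x != y for x, y in zip(code_a, code_b)) / 4


if __name__ == "__main__":
    print(plate_prefix_distance("NQZ", "NQZ 4821"))
    print(name_sound_distance("Mike", "Myke"))
```

Each function returns a number between 0 and 1, so the scores can sit alongside any other metrics the system already computes. A real deployment would presumably need something more forgiving (misremembered characters in the middle of a plate, better phonetic algorithms), but the shape of the work is the same: one small scoring function per kind of similarity.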

Not many questions involving similar license plates come up in Jeopardy!, although similar-sounding names might, so perhaps only one new metric has to be written in this example. This would create a new quantitative score that would compare two cases in the database exclusively on how similar their license plates are. A separate metric (or at least separate treatment) is needed, because similar or matching license plates between two cases are a qualitatively very different signal from some random text matching between the cases. The choice of metrics presumably re-imposes some structure on the resulting system and data interpretation. In a more realistic example, an experienced agent would guide the Watson engineers in creating new quantitative metrics based on how agents compare cases or suspects in real life. They might create a metric that could compare two artists’ sketches, for example, or score how similar a sketch is to a photo. Machine learning would then take over to figure out how to integrate the different metrics in formulating responses to natural language questions. (For example: should similar license plates dominate when appearances are different?)
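As a rough sketch of that last step, the snippet below fits a tiny logistic regression that learns how much weight to give each metric. Everything in it is an assumption made for illustration: the training rows are invented toy data, the two features (a plate distance and an appearance distance, both in [0, 1]) are hypothetical, and we are not claiming this is the learning method Watson uses.

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_metric_weights(rows, labels, lr=0.5, epochs=2000):
    """Fit logistic-regression weights by batch gradient descent.

    rows   -- list of feature vectors, one distance score per metric
    labels -- 1 if the two cases really were the same suspect, else 0
    """
    n = len(rows)
    weights = [0.0] * len(rows[0])
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(rows, labels):
            p = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            err = p - y
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def match_probability(x, weights, bias) -> float:
    """Estimated probability that two cases involve the same suspect."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


# Invented toy data: (plate_distance, appearance_distance) for pairs of cases.
ROWS = [
    (0.0, 0.1), (0.0, 0.8), (0.1, 0.5), (0.0, 0.6),  # same suspect
    (0.9, 0.1), (1.0, 0.7), (0.8, 0.4), (1.0, 0.2),  # different suspects
]
LABELS = [1, 1, 1, 1, 0, 0, 0, 0]

if __name__ == "__main__":
    weights, bias = fit_metric_weights(ROWS, LABELS)
    print("plate weight:", weights[0], "appearance weight:", weights[1])
    # Plates match but appearance differs a lot:
    print(match_probability((0.0, 0.9), weights, bias))
```

In this toy data the plates separate the two groups cleanly while appearance does not, so the learned plate weight ends up much larger in magnitude than the appearance weight. That is the sense in which the learner, rather than the programmer, decides which metric should dominate.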

These considerations then get at the true cost of a Watson deployment. They also answer the question about the infrastructure that should be built out to develop a pre-Watson prototype: if the similarity you’re looking for isn’t asked for in Jeopardy!, write a custom distance metric for it.

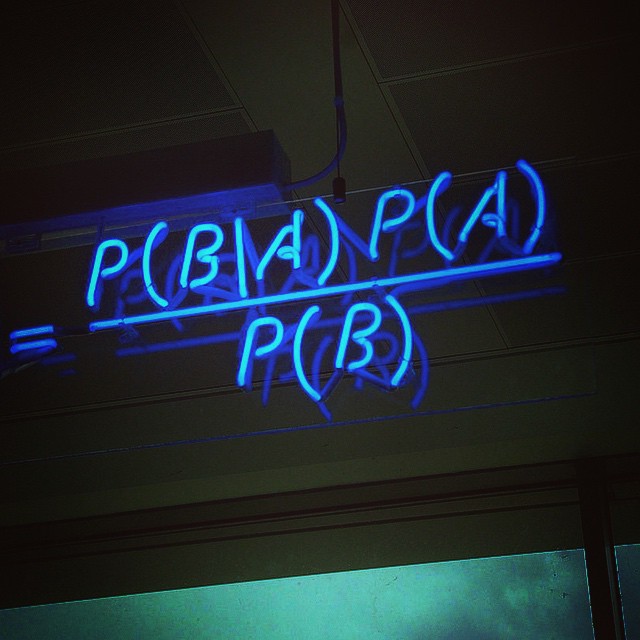

Photo: Wikimedia/mattbuck/cc-by-SA-3. Black light office art & artwork: our featured photo is Bayes’ theorem in neon. When this photo was originally published on our Instagram feed, we used it to wrap up our final set of clues in our reader’s puzzle on the relationship between ostriches, Aristotle and data science.

This is Bayes’ theorem from statistics (and data science) spelled out in blue neon at the Cambridge, UK offices of data science firm Autonomy. (Apologies to the frequentists, or should we say frequentistas, among our readers. This supposedly rival but in reality complementary branch of statistics is in a holy war against Bayesians. We’re being satirical here in a nod to today’s (Jan 8, 2015’s) tragic events. Even bloggers have been targeted by dictators and fanatics, so these things make us all less free, but more on that later.)

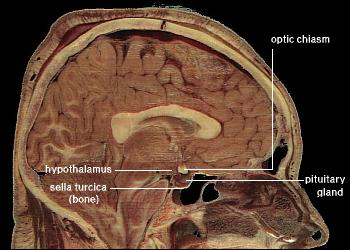

The clue was Bayes’ rule. As we and others have argued elsewhere, there are only so many ways you can design an intelligent system. The requirements of convergent evolution will dictate that essentially any such system uses statistical inference, and specifically Bayes’ rule. There is growing evidence in neuroscience that the human brain does, indeed, use Bayes’ rule, hardcoded by evolution. IBM Watson thus necessarily makes use of Bayes’ rule as one of many parts of a complex chain of statistical reasoning and machine learning heuristics.
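For readers who haven’t seen it written out, this is the standard statement of Bayes’ rule. The symbols are our own choice for illustration: $H$ is a hypothesis (say, "this candidate answer is correct") and $E$ is the evidence (say, the scores from the distance metrics).

$$
P(H \mid E) = \frac{P(E \mid H)\, P(H)}{P(E)},
\qquad
P(E) = P(E \mid H)\, P(H) + P(E \mid \neg H)\, P(\neg H)
$$

A worked example with made-up numbers: suppose a candidate answer has prior probability $P(H) = 0.01$, the evidence shows up 90% of the time when the answer is right, $P(E \mid H) = 0.9$, and 5% of the time when it is wrong, $P(E \mid \neg H) = 0.05$. Then

$$
P(H \mid E) = \frac{0.9 \times 0.01}{0.9 \times 0.01 + 0.05 \times 0.99} = \frac{0.009}{0.0585} \approx 0.15
$$

So even fairly strong evidence only lifts a 1% prior to about 15%, which is one reason a system like this would want many independent pieces of evidence rather than a single close text match.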


